Oracle Fusion Applications search functionality is made possible by the integration of three systems, each playing a role in forming the complete search platform: the source system, such as a relational database, where the searchable information resides; the search engine, provided by Oracle Secure Enterprise Search (Oracle SES), which supplies the fundamental search capability, including indexing and querying, as well as value-added functionality such as security; and a search development framework, such as Oracle Enterprise Crawl and Search Framework (ECSF), that supports the integration of applications with search engines. The runtime server is a metadata-driven runtime engine that serves as an integration framework between enterprise data sources and Oracle SES. It enables crawling, indexing, and the security service. It also serves as the semantic engine that provides smart search features, such as faceted navigation, actionable results, and related search.

If I didn’t really like the software, I wouldn’t bother complaining about the issues. I would simply choose something different.

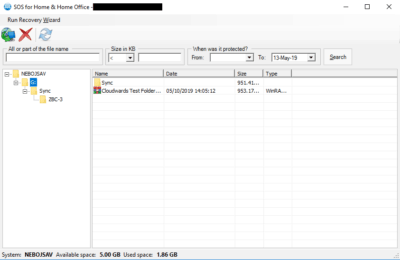

Duplicati features are absolutely awesome. We’re not there with Duplicati yet. Anyway, as mentioned before. And it’s joyfully rare that a file system becomes absolutely and irrecoverably corrupted. Sure, I’ve had similar situations with ext4 and NTFS, but in most cases that’s a totally broken SSD or “cloud storage backend”. Recovering from that has usually been nearly impossible. And at times it has also blown up without a hard reset.

Currently the backup works out mostly OK, but you never know when it blows up. And if the software is really bad, the file system gets corrupted during normal operation even without a hard reset. If the system is hard reset, the journal should allow on-boot recovery, and even if that fails, there should be a process to bring consistency back, even if it isn’t as fast as the journal-based roll forward or backward. First the primary issue should be dealt with efficiently and automatically, and even if that fails, the secondary recovery process, automated or manual, should work in some adequate and sane way.

As an example, take file systems or databases. On production-ready software both of those recovery situations should work. First something goes slightly amiss, and then the recovery process is bad, and that’s what creates the big issue. Or the restore takes a month instead of one hour and still fails.

But many of those issues are linked to secondary problems. You never know when you can’t restore the data anymore.

> Which just means that overall reliability isn’t good enough for production use.

I see problems every now and then, and I’ve got automated monitoring and reporting for all of the jobs.

But there are fundamental issues: things which really shouldn’t get broken do get broken, and the recovery from those issues is at times poor to none. I also try to test the backups around monthly, and I’ve fully automated the backup testing. I run 100+ different backup runs daily. These are exactly the things which shouldn’t be manually handled. Or I could have manually tried to delete more files, but the outcome would probably have been uncertain. Probably very small operations would have been enough to fix the backup set, but that logic is missing from the software. I’m forced to completely recreate the 264 GB backup set, which was over 100 GB as Duplicati files, because those are unrecoverable due to bad software logic.

> All efforts and resources used to create backups which can’t be restored are absolutely and literally wasted energy.

It won’t work reliably or in a sane way from a logic / integrity point of view. Serious things like this are the exact reason why the software shouldn’t be used in production. Program logic is still very seriously lacking and restore fails. Restore keeps failing, even if backing up the data deceptively shows that it’s all good. Test fails, but there’s no way to remedy the situation other than deleting everything and starting the backup set from a clean slate. Test, Repair, List-Broken-Files and Purge-Broken-Files: all repair options are more or less broken, because Duplicati still fails with the latest canary. Well, one way could be testing all fresh files + some old ones randomly.

Anyway, what then if the test shows that something is wrong? There’s pretty much nothing you can do about that.
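
The idea of testing all fresh files plus some randomly chosen old ones could look roughly like the sketch below. It only shows the selection step. `BackupFile`, `pick_files_to_verify`, and the `since` cutoff are hypothetical names made up for this example; this is not Duplicati's API, and the actual verification of each picked file is left out.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class BackupFile:
    # Hypothetical record for one remote backup file.
    name: str
    created: int  # e.g. Unix timestamp of when the file was uploaded


def pick_files_to_verify(files, since, old_sample_size, seed=None):
    """Return every file created at or after `since`, plus a random
    sample of at most `old_sample_size` older files."""
    rng = random.Random(seed)
    fresh = [f for f in files if f.created >= since]
    old = [f for f in files if f.created < since]
    # random.sample raises ValueError if asked for more items than exist,
    # so cap the sample size at the number of old files.
    sampled_old = rng.sample(old, min(old_sample_size, len(old)))
    return fresh + sampled_old
```

Running this on every test cycle means new files are always checked once, while old files get re-checked gradually over time instead of all at once.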
